Concepts

This guide introduces the concepts that make Covalent unique as a workflow management system for machine learning experimentation. It has two parts.

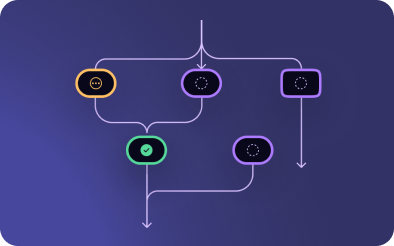

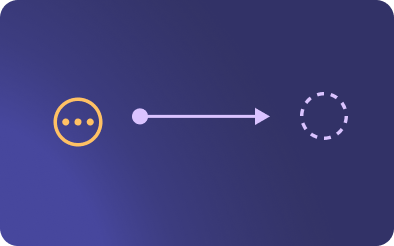

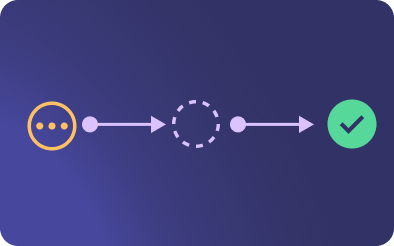

The first part, Covalent Basics, introduces the key code elements that serve as the building blocks of Covalent workflows:

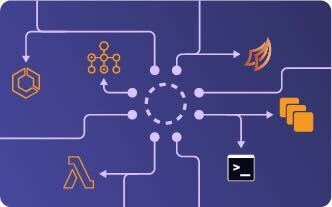

The second part, Covalent Architecture, outlines the three main parts of the Covalent architecture and introduces the in-depth descriptions that follow: